Technical SEO Signals That Matter Most in Google’s AI Era

Key Takeaways

- Technical SEO gives Google confidence in your site, helping real customers actually reach and benefit from your content.

- Crawlability and index quality decide whether search engines reliably find, understand, and keep surfacing your most important pages.

- Structured data and clear entities reduce guesswork, making pages easier for AI systems to reference accurately and safely.

- Fast, stable experiences guided by Core Web Vitals protect attention, strengthen engagement, and support more resilient long-term rankings.

- Thoughtful internal linking turns scattered pages into topic journeys, guiding people and algorithms toward clearer, better-informed decisions.

- Mobile-first performance and design shape the primary version of your site that Google indexes, evaluates, and presents widely.

- Clean architecture, consistent signals, and honest rendering create foundations that future content, campaigns, and experiments can reliably extend.

- Treating technical SEO as ongoing maintenance, not a project, keeps visibility, trust, and revenue aligned as search evolves.

Ranking today isn’t just about publishing content and building links. In Google’s AI-driven search environment, technical signals determine whether your pages are even considered for visibility. If a website cannot be crawled efficiently, rendered correctly, or structured in a way search engines understand, even high-quality content may struggle to appear in search results. Backlinko’s study of roughly 4 million results shows the #1 organic result gets a 27.6% CTR, while only 0.63% of searchers click anything on page two.

On top of that, more and more decisions never reach your website at all. Semrush reports that 58.5% of U.S. searches in 2024 ended without a single click, with AI Overviews and rich SERP features answering questions directly on the results page. That means the sites with a clean structure, clear entities, and strong AI-driven SEO signals are the ones most likely to be surfaced within those experiences; everyone else risks becoming invisible background noise. This isn’t about hacks. It’s about being the easiest possible site to crawl, parse, and reuse.

For technical SEO 2026, think infrastructure, not tricks. Your job is to build a machine-readable environment that supports smart content, rather than band-aiding bad structure with last-minute fixes. Brands that take AI search optimization seriously prioritize crawl paths, markup, performance, and internal architecture. Do that well, and every blog post, landing page, and product detail page you ship has a far better chance of being trusted by humans and algorithms.

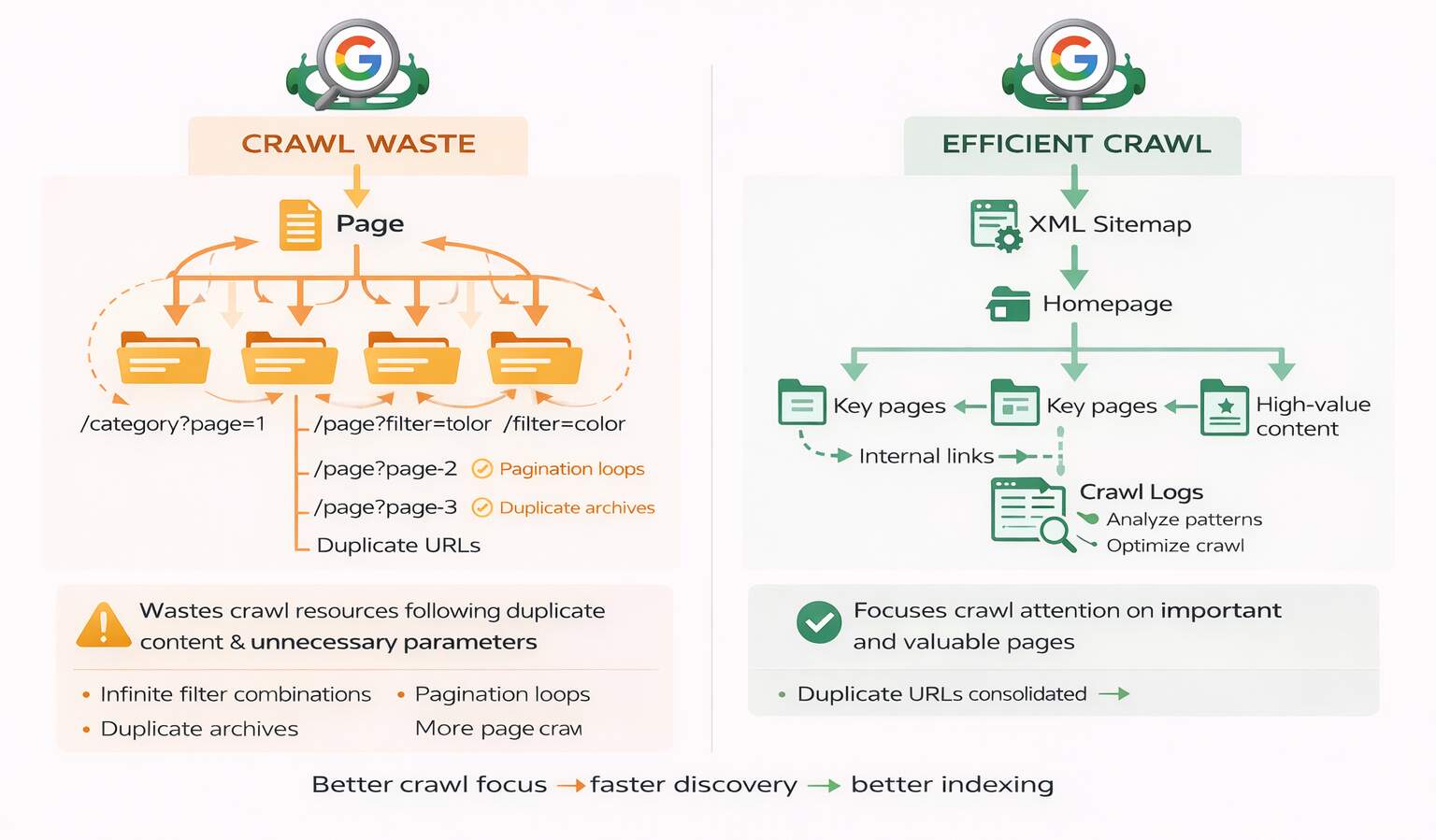

Can Google Crawl Without Wasting Energy?

Google does not crawl your website with endless patience or unlimited resources. If bots keep landing on duplicate pages, weak archives, broken paths, or messy parameter URLs, you are wasting crawl attention on content that adds no real value. That becomes a problem because the more time Google spends sorting through noise, the less efficiently it can discover, refresh, and prioritize the pages that actually matter for search visibility.

What helps most:

- Reduce duplicate and parameter-heavy URLs so crawlers are not sent through endless versions of the same page.

- Keep your XML sitemap focused on clean, indexable, high-value pages instead of dumping in everything your CMS generates.

- Strengthen internal linking to key pages so important content is easy to reach and clearly signaled as valuable.

- Review crawl patterns through logs or audits to spot where Googlebot is wasting time.
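Reviewing crawl patterns can start with a simple tally of where Googlebot's requests go. The sketch below is a minimal example under stated assumptions. It expects access logs in the common Apache/Nginx "combined" format. Its bucketing rules are illustrative: a hypothetical `/search` prefix marks internal search, and any query string counts as a parameter URL. Adapt both assumptions to your own stack.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Matches the request, status, size, referrer and user-agent fields of a
# "combined" format access-log line (an assumption; adjust for your server).
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def classify(path):
    """Bucket a requested path into a rough crawl category (illustrative rules)."""
    parts = urlsplit(path)
    if parts.path.startswith("/search"):  # hypothetical internal-search path
        return "internal_search"
    if parts.query:  # any query string counts as a parameter URL here
        return "parameter"
    return "clean"


def googlebot_crawl_report(lines):
    """Count requests whose user agent claims to be Googlebot, per category."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[classify(match.group("path"))] += 1
    return counts


if __name__ == "__main__":
    import sys

    for category, hits in googlebot_crawl_report(sys.stdin).most_common():
        print(f"{category}: {hits}")
```

A high share of `parameter` or `internal_search` hits suggests tightening canonicals, internal links, or robots rules. User-agent strings can be spoofed, so confirm that traffic really comes from Googlebot (for example with a reverse DNS lookup) before drawing conclusions.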

When crawl paths are clean, Google spends less energy filtering clutter and more energy understanding your strongest content. That is the real win. Better crawling is not some technical vanity metric. It directly affects how consistently your important pages get found, revisited, and taken seriously by search engines.

Are Pages Indexed Or Just Reachable?

A page can be easy to crawl and still not qualify for indexing. Indexing acts as a quality filter, and search engines rely on indexation signals to determine whether a page deserves to appear in search results. Thin tag pages, near-identical city or product variations, and low-value archives dilute the strength of your overall library. When you add conflicting canonicals, mismatched sitemaps, and sloppy internal links on top, you end up sending mixed technical SEO signals and essentially dare Google to guess which URL you actually care about. When systems have to guess, they rarely choose the version you’d pitch in a meeting.

Index bloat is usually self-inflicted. Many sites unintentionally create large numbers of filter pages, archives, and internal search URLs. That raises the number of indexed pages, but it rarely improves visibility. Instead, it spreads ranking signals across low-value pages and drags down the average quality of what you’re offering. Smart crawlability optimization accepts that not every URL should compete in organic search. Removing or noindexing weak pages lets Google focus its evaluation and crawl resources on your best work instead of your leftovers. A leaner index also makes your site look more like a curated library and less like a random content dump.
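Deciding which pages to noindex works best as an explicit, reviewable rule set rather than ad hoc tweaks. Here is a minimal sketch that assumes hypothetical URL patterns: `/tag/` and `/search` paths, plus a few filter parameters. Real sites will need their own list.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical policy for one site; every CMS names these patterns differently.
LOW_VALUE_PREFIXES = ("/tag/", "/search")
FILTER_PARAMS = {"color", "size", "sort"}


def robots_directive(url):
    """Return the robots meta content for a URL under the policy above."""
    parts = urlsplit(url)
    if parts.path.startswith(LOW_VALUE_PREFIXES):
        return "noindex, follow"
    # parse_qs returns a dict keyed by parameter name; any filter key triggers noindex.
    if FILTER_PARAMS.intersection(parse_qs(parts.query)):
        return "noindex, follow"
    return "index, follow"


def robots_meta_tag(url):
    """Render the tag a page template would place in <head>."""
    return f'<meta name="robots" content="{robots_directive(url)}">'
```

Google can only see a `noindex` directive on pages it is allowed to crawl. Don't also block those URLs in robots.txt, or the directive is never read.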

Canonicals are supposed to be the referee, but they only work when the rest of your setup backs them up. If the canonical tag points to one URL, internal links push another, and the sitemap lists a third, you’ve turned a simple hierarchy into an argument. The fix is boring but crystal clear: pick one canonical per intent and align links, sitemaps, and redirects around it. Once everything points in the same direction, your technical SEO signals stop contradicting each other and start backing one strong, authoritative version.
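You can catch this kind of argument by comparing each URL's declared canonical against the sitemap and the internal link targets. This sketch assumes you have already exported those three data sets from a crawler into plain Python collections. The function and argument names are hypothetical.

```python
def canonical_conflicts(canonicals, sitemap_urls, internal_link_targets):
    """List URLs where the sitemap or internal links promote a non-canonical version.

    canonicals: dict mapping each crawled URL to the URL its rel=canonical declares.
    sitemap_urls: set of URLs listed in the XML sitemap.
    internal_link_targets: set of URLs that internal links point to.
    """
    issues = []
    for url, canonical in sorted(canonicals.items()):
        if url == canonical:
            continue  # self-canonical pages are the versions we want promoted
        if url in sitemap_urls:
            issues.append((url, f"listed in sitemap but canonicalizes to {canonical}"))
        if url in internal_link_targets:
            issues.append((url, f"linked internally but canonicalizes to {canonical}"))
    return issues
```

Each issue points at a place where links, sitemaps, or redirects should be realigned to the single canonical URL.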

Index quality list

- Export all indexed URLs; tag filters, archives, and duplicates for cleanup.

- Consolidate near-duplicate pages into a single, clear canonical per topic.

- Limit the sitemap to pages you’d be happy to walk a high-value prospect through.
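A sitemap stays limited to pages worth showing a prospect more easily when it is generated from a filter rather than dumped from the CMS. Here is a minimal sketch. It assumes each page record carries hypothetical `url`, `status`, `noindex`, and `canonical` fields from an audit export. The namespace is the standard one from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML containing only live, indexable, self-canonical pages."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        keep = (
            page["status"] == 200               # skip redirects and errors
            and not page["noindex"]             # skip pages excluded from the index
            and page["canonical"] == page["url"]  # skip duplicates of another URL
        )
        if keep:
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")
```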

Index Quality Comparison Table

| URL Type | Current Count | Ideal Range | What It Tells Google | Fix Priority |
|---|---|---|---|---|
| High-intent pages | 80 | 120–150 | Focused, but underserving | Medium |
| Thin/tag/archive pages | 260 | <50 | Bloated, low-value index | Very High |
| Parameter/search URLs | 140 | 0–10 | Wasted crawl, noisy signals | High |
| Total indexed URLs | 480 | ~200 | Quantity over clarity | High |
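The example table's rows add up to its 480 total. Reproducing that arithmetic shows how lopsided the index is: thin/tag/archive and parameter/search URLs together make up 400 of the 480 indexed URLs. This sketch only reuses the table's illustrative counts; swap in numbers from your own export.

```python
# Example counts copied from the Index Quality Comparison Table.
indexed = {
    "high_intent": 80,
    "thin_tag_archive": 260,
    "parameter_search": 140,
}

total = sum(indexed.values())  # matches the table's "Total indexed URLs" row
low_value = indexed["thin_tag_archive"] + indexed["parameter_search"]
low_value_share = low_value / total

print(f"Total indexed URLs: {total}")
print(f"Low-value URLs: {low_value} ({low_value_share:.1%} of the index)")
```

With these numbers, the script prints a low-value share of 83.3%.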

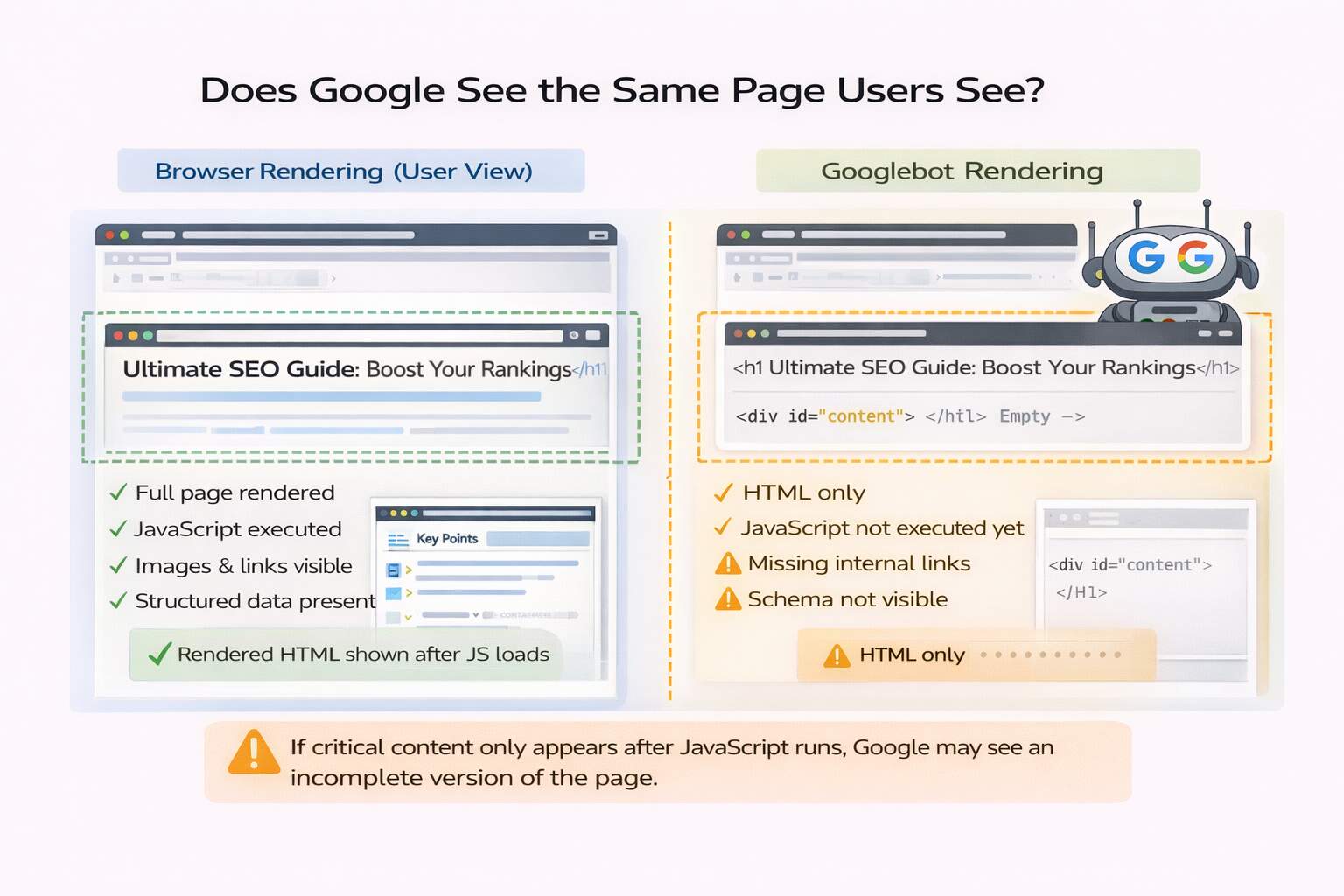

Does Google See the Same Page Users See?

On modern sites, rendering is the real gatekeeper. A page that appears complete in a browser may still present an incomplete version to search engines if important content, links, or structured data load only after JavaScript execution or user interaction. That’s a serious problem in a world where Google AI ranking factors put a lot of weight on clarity and reusability. When the rendered HTML is thin, your technical SEO signals effectively say “uncertain source,” so AI systems are less likely to quote or surface you, even if human visitors eventually see a beautiful, fully loaded version.

The safer approach is to make sure your main story lives in HTML before any fancy scripts run. Headings, core copy, primary internal links, and markup should all be visible to a non-JavaScript client. That’s where semantic HTML structure still quietly pulls its weight. If critical information only appears after complex front-end components load, search engines may struggle to interpret the page correctly. Prioritizing accessible HTML structure ensures that both users and crawlers can immediately understand the page’s core message. AI can’t reliably reuse what it can’t reliably render, and you don’t get bonus points for clever front-end engineering that hides meaning from crawlers.

Instead of trusting how the site feels on your laptop, test the way a bot experiences it. Use tools that render HTML, such as the URL Inspection tool in Google Search Console. Compare the page source with the rendered HTML Google actually sees, and look for blocked or missing resources. If critical sections vanish when JavaScript fails, that’s not a tiny edge case; it’s a structural flaw. Fixing those gaps is one of the quickest ways to strengthen AI-driven SEO signals without touching a sentence of copy. The goal isn’t to ban JavaScript; it’s to make sure that even if everything dynamic broke tomorrow, the page would still make sense.

Render reality check

- Capture rendered HTML for key URLs and confirm all critical content appears.

- Refactor components that hide core text or links behind interactions.

- Make sure resources needed for rendering aren’t blocked or misconfigured.
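A rough first pass at the render check is to parse the server-delivered HTML, with no JavaScript executed, and confirm that critical phrases and links are already there. This only approximates a non-JavaScript client, not Google's renderer. Compare the result with the rendered HTML in Search Console's URL Inspection tool. The `required_phrases` and `required_links` inputs are assumptions you would define per template.

```python
from html.parser import HTMLParser


class RawHtmlScanner(HTMLParser):
    """Collects text and <a href> targets from HTML without running any JavaScript."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.links = set()
        self._skip_depth = 0  # >0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_parts.append(data)


def missing_without_js(raw_html, required_phrases, required_links):
    """Return (phrases, links) that are absent from the server-delivered HTML."""
    scanner = RawHtmlScanner()
    scanner.feed(raw_html)
    scanner.close()
    text = " ".join(" ".join(scanner.text_parts).split())  # normalize whitespace
    missing_phrases = [p for p in required_phrases if p not in text]
    missing_links = [link for link in required_links if link not in scanner.links]
    return missing_phrases, missing_links
```

Anything this function reports as missing only exists after scripts run. Those are the components worth refactoring first.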

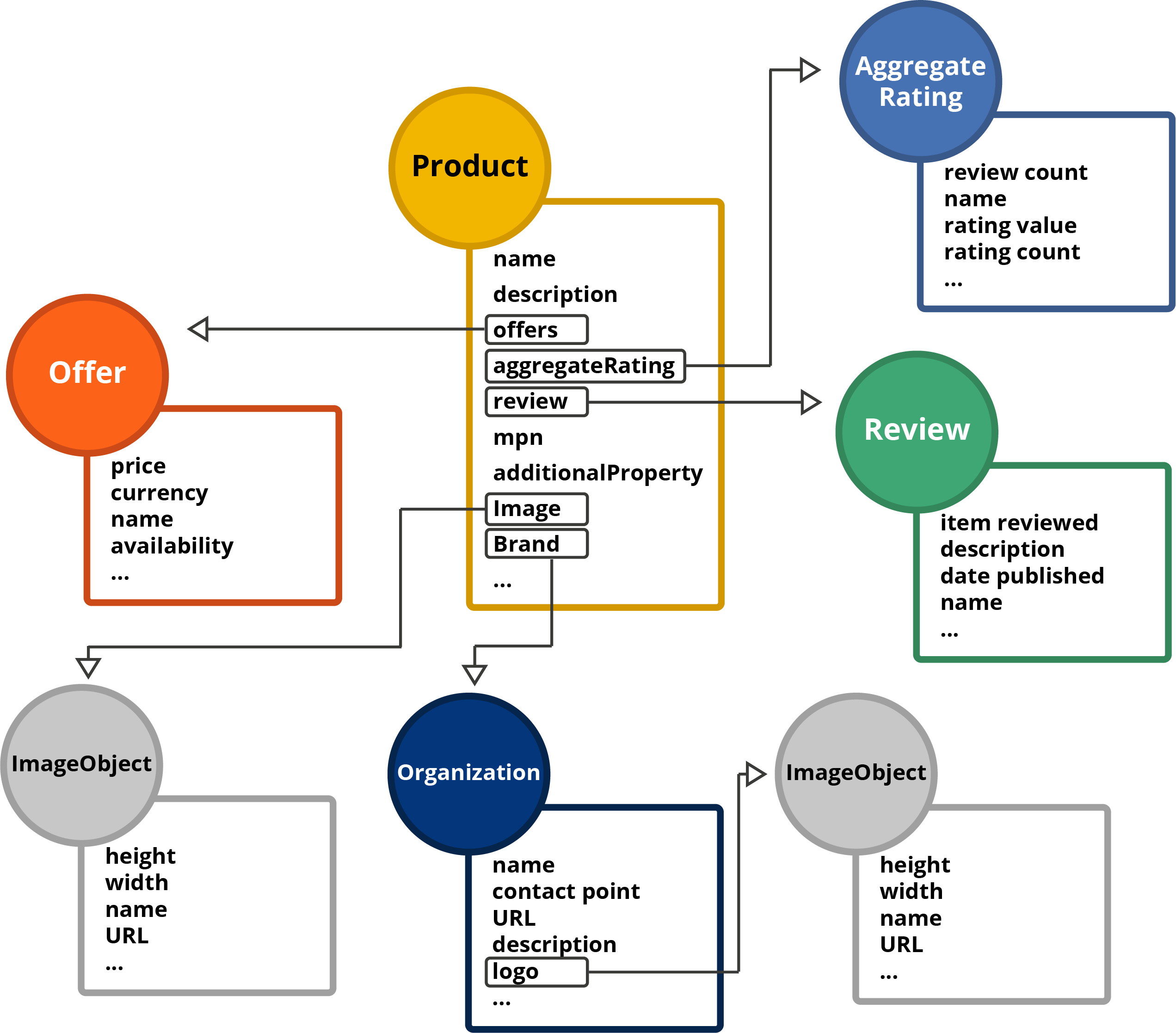

Can Machines Understand Without Guessing?

Search engines and AI systems are advanced, but they still work best when your website makes meaning obvious. If your content is loosely organized, your markup is inconsistent, or essential details are buried behind scripts and weak formatting, machines are forced to fill in gaps on their own. That is risky, because when systems have to guess, they are more likely to misunderstand what your page is about, what it offers, and why it deserves visibility.

What makes interpretation easier:

- Use focused structured data that clearly explains the page type, main entity, and important content signals.

- Match schema to visible content so there is no disconnect between markup and what users actually see.

- Keep key information available in HTML instead of hiding meaning behind JavaScript-heavy experiences.

- Use clean headings and logical content hierarchy so important ideas are easy to identify and summarize.
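One way to keep schema matched to visible content is to generate the JSON-LD from the same variables the template already renders, so the two cannot drift apart. This sketch builds a minimal schema.org `Article` object. The function name and the choice of properties are illustrative, not a complete or required set.

```python
import json


def article_json_ld(headline, author_name, date_published, publisher_name, url):
    """Build a minimal schema.org Article JSON-LD tag from the values the page displays."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2025-01-15"
        "publisher": {"@type": "Organization", "name": publisher_name},
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape, so parsers still read the original text.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

The headline, author, and date passed in here should be the same values shown on the page, which keeps markup and visible content aligned.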

The goal is not to impress machines with complexity. The goal is to remove ambiguity. When your page structure, schema, and visible content all reinforce the same message, search engines do not need to guess what matters. That clarity improves interpretation, trust, and the likelihood that your content will be surfaced accurately in search and AI-driven environments.

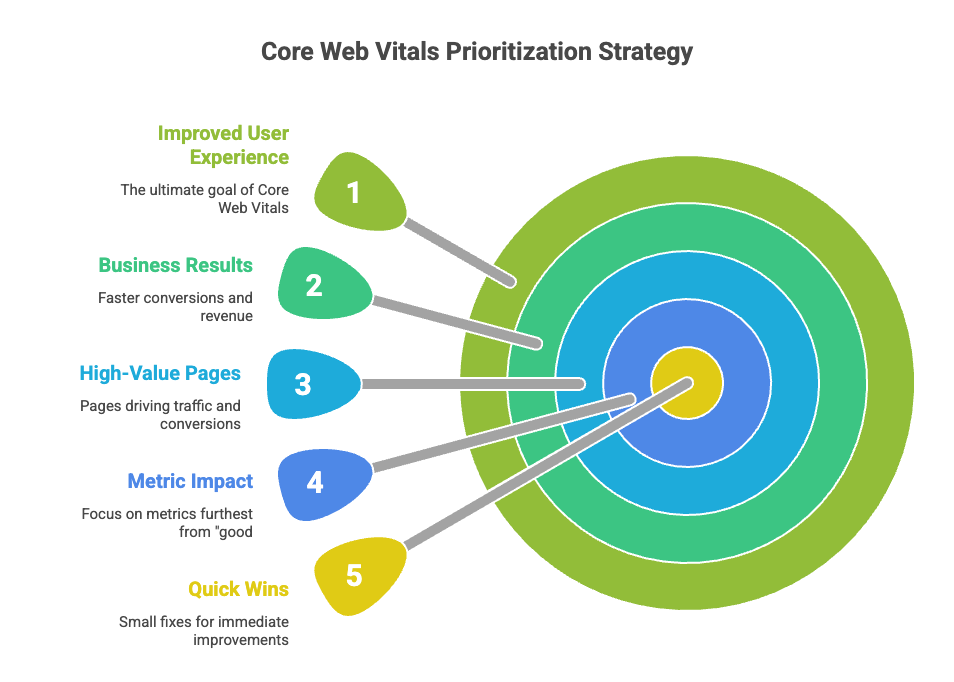

Is Your Site Fast, Stable, and Friendly?

Page speed and stability are essential components of modern search performance and user experience. Google’s Core Web Vitals guidelines suggest aiming for LCP ≤ 2.5s, INP ≤ 200ms, and CLS ≤ 0.1 at the 75th percentile of page views. When you hit those marks, the experience feels dependable; when you don’t, it feels sloppy. That perceived reliability feeds into page experience ranking considerations and into broader technical SEO signals that mark your site as low-friction. Slow, unstable pages don’t just annoy users; they actively waste your visibility opportunities.
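The thresholds apply at the 75th percentile of page views, so a page is judged by its slower visits rather than its average. The sketch below rates field samples against Google's published "good" limits and "poor" boundaries (LCP > 4 s, INP > 500 ms, CLS > 0.25). It uses a simple nearest-rank percentile, which may differ slightly from how field tools such as CrUX aggregate data.

```python
import math

# Core Web Vitals thresholds: (upper bound of "good", upper bound of "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def p75(samples):
    """75th percentile by the nearest-rank method (samples must be non-empty)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, samples):
    """Return (p75 value, rating) for one metric's field samples."""
    value = p75(samples)
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return value, "good"
    if value <= needs_improvement:
        return value, "needs improvement"
    return value, "poor"
```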

Responsiveness is where many sites quietly fall behind. CrUX data behind INP thresholds shows that only a minority of popular phone origins reliably hit the 200ms “good” threshold, and performance worsens as pages become more complex. Meanwhile, user patience is brutally short: several studies show that around 53% of mobile users bail out if a page takes longer than three seconds to load. If your page “looks loaded” but feels sluggish every time someone taps, scrolls, or types, users—and any Google AI ranking factors tied to engagement—will move on without ceremony.

Mobile isn’t a segment anymore; it’s the default context. More than half of global web traffic now comes from mobile devices, and bounce rates can jump by 30% or more as load time increases from 1 to 3 seconds. For technical SEO 2026, the to-do list is straightforward even if the work isn’t: strip out heavy third-party scripts, compress and modernize images, optimize fonts, and keep layouts stable while assets load. Tackling the single worst performance offender on each high-value page often does more for real users—and for search—than chasing tiny gains across everything at once.

Performance leak map

- Identify your top 10 organic + revenue landing pages.

- For each one, decide whether LCP, INP, or CLS is the main problem.

- Fix that one metric first (images, JS bloat, fonts, or layout shifts).
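You can make the "pick one metric" step mechanical. Divide each page's 75th-percentile value by its "good" threshold, which puts seconds, milliseconds, and CLS scores on one scale. This ratio heuristic is an assumption of the sketch, not a Google method. The page URLs and values you pass in come from your own field data.

```python
# "Good" thresholds from the Core Web Vitals guidance, applied to p75 values.
GOOD = {"LCP": 2.5, "INP": 200, "CLS": 0.1}


def main_problem(p75_values):
    """Return the metric furthest over its 'good' threshold, or None if all pass.

    p75_values: dict like {"LCP": 3.1, "INP": 240, "CLS": 0.05} for one page.
    """
    ratios = {metric: p75_values[metric] / GOOD[metric] for metric in GOOD}
    worst = max(ratios, key=ratios.get)
    return worst if ratios[worst] > 1 else None


def triage(pages):
    """Map each landing page URL to the single metric to fix first."""
    return {url: main_problem(values) for url, values in pages.items()}
```

A page that returns `None` already passes all three metrics. You can leave it alone while you work on the others.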

Same Language Everywhere You Operate?

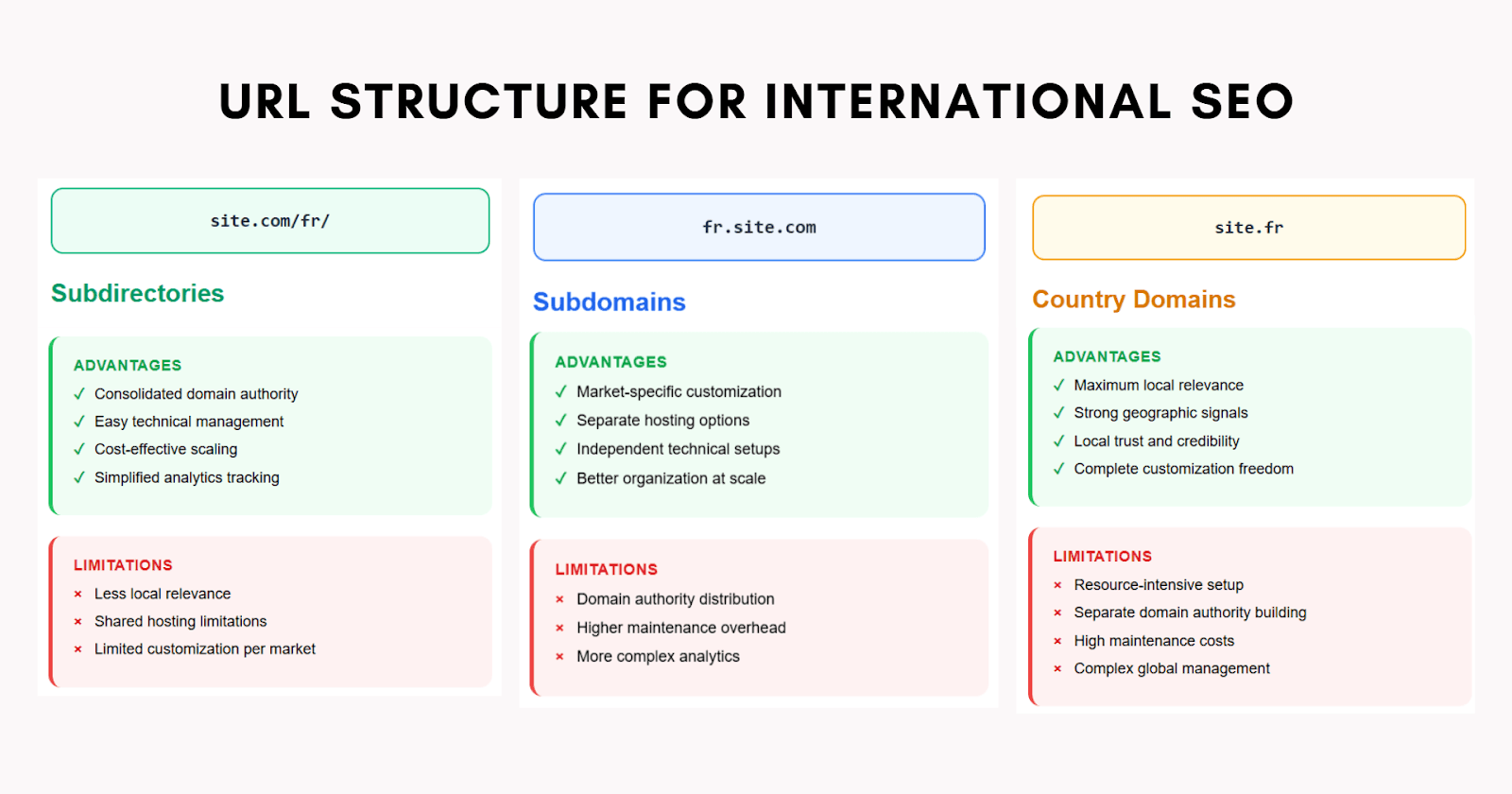

International SEO often looks fine on the surface while quietly failing underneath. A brand may have localized pages, translated content, and separate regional URLs, yet still send mixed signals to search engines and users. When that happens, the wrong page can rank in the wrong market, users can land on content that feels out of place, and trust drops fast. That is why international SEO is not just about translation. It is about making every regional version technically clear, locally relevant, and consistent in how it communicates intent.

What keeps regional SEO clean:

- Use a consistent URL structure across countries and languages so regional targeting is easy to understand.

- Maintain correct hreflang tags to help search engines match each page with the right audience.

- Localize copy, proof, and currency so the page feels genuinely built for that specific market.

- Audit international signals regularly because one broken tag or mismatch can create wider confusion.
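Correct hreflang means every page in a language group lists all alternates, including itself, and each alternate links back. Here is a minimal sketch that assumes you maintain a hypothetical mapping of language-region codes to URLs. The first function renders the tags, and the second flags missing return links during an audit.

```python
def hreflang_tags(alternates, x_default):
    """Render reciprocal hreflang <link> tags for one group of equivalent pages.

    alternates: dict of language-region code (e.g. "en-gb") -> absolute URL.
    Every page in the group should output this same block, including a tag
    pointing at itself.
    """
    tags = [
        f'<link rel="alternate" hreflang="{code}" href="{url}">'
        for code, url in sorted(alternates.items())
    ]
    # x-default names the fallback page for users who match no listed locale.
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{x_default}">')
    return "\n".join(tags)


def missing_return_links(declared):
    """Find (page, target) pairs where page lists target but target doesn't list page.

    declared: dict of page URL -> set of alternate URLs found in its hreflang tags.
    """
    problems = []
    for page, targets in declared.items():
        for target in targets:
            if page not in declared.get(target, set()):
                problems.append((page, target))
    return sorted(problems)
```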

When regional pages are properly structured and genuinely localized, they stop feeling like copied variations of the same page. They start feeling intentional and trustworthy. That matters because search engines want confidence in your targeting, and users want confidence that they are in the right place. Strong international SEO earns both at the same time.

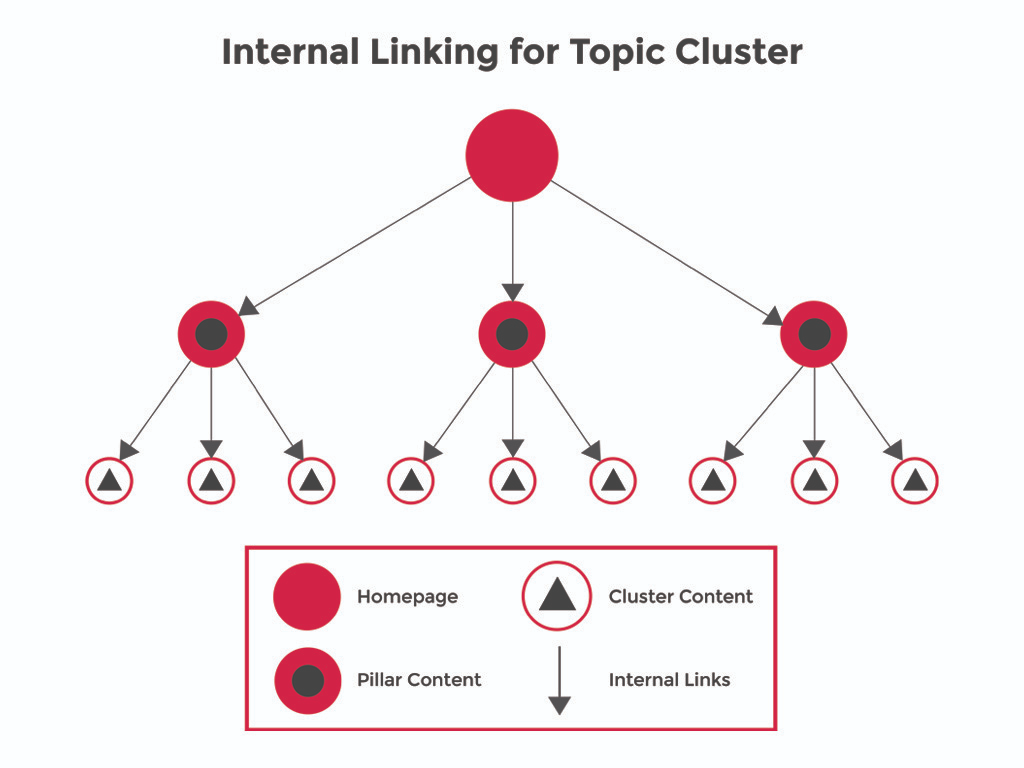

Is Internal Linking Guiding The Algorithm?

Internal links help search engines understand how the pages on your site relate to each other. When hub pages, in-depth articles, and decision-stage pages all connect logically, you’re sketching a clear, crawlable map of your expertise. That map is one of the strongest AI-driven SEO signals you control: it shows which topics you cover deeply and how someone should move from “just researching” to “ready to choose.” Without it, even great content looks like isolated islands, and isolated islands send very weak technical SEO signals about real authority.

The place to start is usually not glamorous: fix orphan pages and tame noisy links. Orphan pages, meaning pages with zero or only one internal link pointing to them, rarely perform well, no matter how optimized the on-page content is. Pages stuffed with dozens of random links lose focus. A stronger internal linking structure guides users from educational content to comparison resources and finally to decision or conversion pages. That pattern not only matches how people decide but also reinforces site architecture SEO, because related topics naturally cluster rather than being thrown into a flat heap.

Breadcrumbs and contextual links behave like a two-engine system. Breadcrumbs quietly answer “where am I on this site?” while in-text and end-of-page links answer “what’s the smartest thing to look at next?” Over time, those patterns make your domain a safer bet when systems assemble answers, overviews, and recommendations—that’s the compounding effect of smarter AI search optimization. If navigation feels clean and obvious to humans, there’s a very good chance you’ve also made life easier for crawlers, which is exactly what you want.
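Breadcrumbs can also be exposed to machines through schema.org `BreadcrumbList` markup, which Google supports for breadcrumb display in search results. This sketch builds that structure from a hypothetical list of `(name, url)` pairs, with the home page first. Serialize the result with `json.dumps` inside a `<script type="application/ld+json">` tag.

```python
import json


def breadcrumb_json_ld(trail):
    """Build schema.org BreadcrumbList data from (name, url) pairs, home page first."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }


if __name__ == "__main__":
    trail = [
        ("Home", "https://example.com/"),
        ("Guides", "https://example.com/guides/"),
        ("Technical SEO", "https://example.com/guides/technical-seo/"),
    ]
    print(json.dumps(breadcrumb_json_ld(trail), indent=2))
```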

Link cleanup sprint

- Find pages with zero or one internal link and connect them to relevant hubs.

- Turn top guides into mini-hubs with 3–5 focused “next step” links.

- Add breadcrumbs to core templates, then walk real users through their journeys to see how the navigation feels to them.
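Once you have a crawl's link list, finding pages with zero or one internal link is a counting exercise. This sketch assumes two hypothetical inputs: the set of URLs you want indexed, and `(source, target)` link pairs from any crawler export. It counts distinct linking pages and ignores self-links.

```python
from collections import defaultdict


def weakly_linked_pages(all_pages, links, max_inbound=1):
    """Return pages with at most `max_inbound` distinct internal pages linking to them.

    all_pages: every URL you want indexed (e.g. from the sitemap).
    links: iterable of (source_url, target_url) pairs from a site crawl.
    Self-links are ignored because they do not help a page get discovered.
    """
    inbound = defaultdict(set)
    for source, target in links:
        if source != target:
            inbound[target].add(source)
    return sorted(page for page in all_pages if len(inbound[page]) <= max_inbound)
```

The pages this returns are the candidates to connect to relevant hubs first.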

Conclusion: AI Visibility via Technical SEO

Under every consistently high-performing site in Google’s AI era, you’ll find the same thing: a clean, disciplined technical foundation. You don’t need secret algorithm intel; you need crawl paths that don’t waste budget, an index full of pages you’re proud of, markup that tells a consistent story, performance that respects people’s time, and internal structures that make your expertise obvious. Those are the technical SEO signals AI systems quietly reward over and over again.

If your content is strong but visibility remains inconsistent, the issue is often technical infrastructure rather than content quality. Fixing that isn’t glamorous, but it’s exactly what unlocks everything else. If you want a team to tear into the messy details and hand back a site that machines actually enjoy crawling, understanding, and ranking, book a technical SEO audit with eSign Web Services and turn AI-era search from a headache into an advantage.

Frequently Asked Questions (FAQs)

Question: What’s the #1 technical SEO priority in the AI era?

Answer: Index quality is the top priority because AI-era search depends on clean, reliable sources. If your index is bloated with thin pages, duplicates, parameter URLs, or near-identical variants, Google wastes crawl budget and loses confidence in what matters. A focused index helps important pages get crawled more often, understood faster, and selected more consistently for rankings and AI-driven summaries. Start by consolidating duplicates, noindexing low-value pages, and aligning canonicals, sitemaps, and internal links.

Question: How does structured data influence AI-generated search results?

Answer: Structured data improves machine understanding by providing explicit context about content type, authorship, organization identity, products, and relationships between entities. Schema markup is not a direct ranking factor, but it can make it easier for AI-driven systems to identify and retrieve the right information. When Google’s generative models extract information for summaries, structured clarity can improve interpretation accuracy. Rich results and enhanced listings depend on correct markup implementation. Accurate schema strengthens credibility and may improve the odds of appearing in AI Overviews and knowledge panels, although Google does not guarantee that markup leads to inclusion. Structured data must also reflect visible content accurately. Markup that misrepresents the page can lose rich-result eligibility or trigger a manual action.

Question: Is JavaScript SEO still risky in Google’s AI era?

Answer: It can be. Google can render JavaScript, but rendering is resource-intensive and not always consistent across sites, templates, and crawl states. If your main content, internal links, or structured data only appear after delayed scripts or user interactions, Google may index an incomplete version. That hurts both rankings and AI reuse. Reduce risk by ensuring critical content appears in initial HTML or renders reliably, keeping resources crawlable, and validating with rendered HTML checks in Search Console.

Question: Does mobile-first indexing impact AI search inclusion?

Answer: Yes, mobile-first indexing directly affects AI interpretation and inclusion. Google primarily evaluates the mobile version of a website for crawling, indexing, and ranking purposes. If the mobile version omits content or structured data that appears on desktop, or loads slowly, AI systems may misinterpret context or reduce visibility. Responsive design and consistent mobile content ensure clarity across devices. Mobile usability also influences engagement, which may indirectly affect algorithmic trust. Optimizing layout, speed, and readability for smaller screens protects ranking stability and strengthens inclusion potential within AI-generated search summaries and traditional search results alike.

Question: How does internal linking influence AI understanding?

Answer: Internal linking strengthens contextual clarity by connecting related pages through descriptive anchor text and logical hierarchy. Search engines use internal links to interpret topical relationships and distribute authority across the site. Clear linking structures reinforce entity recognition and thematic depth. Poor linking creates orphan pages and weakens crawl efficiency. Strategic interlinking improves discoverability and enhances contextual mapping for AI-driven interpretation models. By organizing content into clusters with coherent internal pathways, websites strengthen authority signals and increase the likelihood of inclusion within both traditional rankings and generative search summaries.

Question: What role does site architecture play in AI-era SEO?

Answer: Site architecture organizes content into logical categories and subcategories, helping search engines interpret thematic depth and expertise. Clean hierarchical structure strengthens crawl efficiency and reduces confusion in large websites. When topics are grouped into coherent silos, AI systems recognize subject authority more effectively. Poor architecture creates duplication, weak linking patterns, and diluted ranking signals. Structured organization improves user navigation and reinforces contextual clarity. Strong architecture therefore supports both technical efficiency and authority recognition, enhancing visibility across evolving search interfaces powered by AI interpretation systems.

Question: Why is internal linking more important for AI-driven search?

Answer: Internal linking teaches search engines how your topics connect. In AI-driven systems, understanding relationships between pages strengthens contextual authority. A clear structure—hub pages linking to detailed subtopics and then to decision pages—creates semantic clarity. Without strong internal pathways, pages feel isolated and harder to evaluate. Breadcrumbs, contextual links, and consistent anchor text help machines interpret topic depth and expertise, improving crawl flow and increasing the likelihood of being surfaced for relevant queries.

Question: How often should technical SEO audits be conducted?

Answer: Technical SEO audits should be conducted quarterly for stable websites and more frequently during major updates, redesigns, or migrations. Regular audits identify crawl errors, broken links, performance declines, and schema inconsistencies before they impact rankings. Monitoring Core Web Vitals and indexation status ensures ongoing compliance with evolving search standards. AI-driven systems continuously refine interpretation models, making proactive maintenance essential. Scheduled evaluations reduce risk of unnoticed technical degradation. Consistent audits protect ranking stability, support authority growth, and maintain optimal visibility across traditional and generative search results.

Question: What is the biggest technical SEO mistake in Google’s AI era?

Answer: The biggest mistake is treating technical SEO as a one-time checklist instead of ongoing infrastructure management. Many websites implement basic optimization during launch but fail to monitor performance, indexation, and structural consistency regularly. Neglecting schema accuracy, internal linking updates, and performance optimization reduces long-term authority. AI-driven search systems reward consistent clarity and structural integrity. Technical precision supports content credibility and inclusion probability. Sustainable SEO success requires continuous monitoring, refinement, and alignment with evolving algorithmic standards rather than static implementation practices.